Dear community,

I am wondering how Zemax calculates the power of a ray that comes out of a DLL surface (an RCWA model for a grating). In the DLL, data[30] represents the relative energy of the ray. I assume this is the diffraction efficiency, i.e. a power-flow ratio. In addition, the output electric field must be supplied in data[40-45]. Based on my tests, the Zemax detector seems to calculate the energy of the ray from the DLL from the squared modulus of its electric field rather than from the diffraction efficiency. However, when the diffraction efficiency of the first order is high, e.g. 99.9%, the squared modulus of the electric field is usually larger than 1 because the k-vector changes. I therefore had to normalize the electric field and multiply it by the square root of the diffraction efficiency to get the right number on the detector, which contradicts the law of wave propagation. Moreover, if I do this, the detector energy changes again when the ray from the DLL interacts with the next surface, which is a glass surface with a perfect AR coating. Do you know what is happening?
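
To make the distinction concrete, here is a minimal Python sketch of the two quantities discussed above. It assumes plane waves in lossless, isotropic, non-magnetic media, where the power flow through the interface scales as n·cos(θ)·|E|². The function names are illustrative only and are not part of the Zemax DLL interface; `rescale_field` simply mirrors the workaround described above (normalize the field, then multiply by the square root of the diffraction efficiency).

```python
import math

def field_power(e_field):
    """Squared modulus of a field given as three complex components (Ex, Ey, Ez)."""
    return sum(abs(c) ** 2 for c in e_field)

def efficiency_from_field(e_out, e_in, n_in, n_out, theta_in, theta_out):
    """Power-flow ratio through the interface (assumption: plane waves,
    lossless isotropic media). Angles are in radians, measured from the normal."""
    flux_ratio = (n_out * math.cos(theta_out)) / (n_in * math.cos(theta_in))
    return flux_ratio * field_power(e_out) / field_power(e_in)

def rescale_field(e_field, efficiency):
    """Workaround from the post: normalize the field, then scale it so that
    its squared modulus equals the diffraction efficiency."""
    scale = math.sqrt(efficiency / field_power(e_field))
    return [c * scale for c in e_field]

# Hypothetical numbers, chosen only to show |E|^2 > 1 with efficiency < 1.
e_in = [1.0 + 0j, 0j, 0j]
e_out = [1.7 + 0j, 0j, 0j]          # |E_out|^2 = 2.89
eta = efficiency_from_field(e_out, e_in, n_in=1.5, n_out=1.0,
                            theta_in=0.0, theta_out=math.radians(60.0))
e_scaled = rescale_field(e_out, eta)
print(field_power(e_out), eta, field_power(e_scaled))
```

With these made-up values the squared modulus of the output field exceeds 1 while the power-flow ratio stays below 1, which is the situation described above. After rescaling, the squared modulus equals the efficiency, but the field no longer carries the n·cos(θ) factor.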

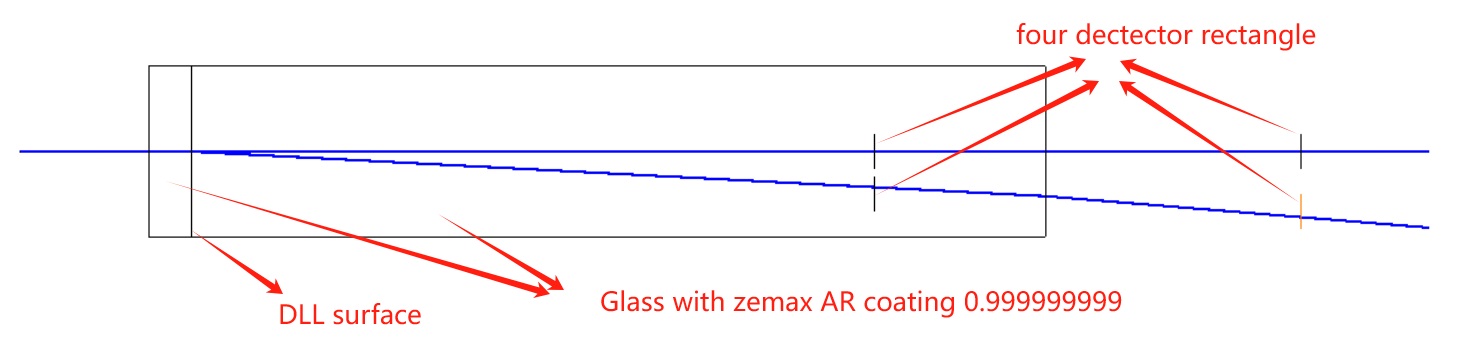

Here is my setup. The power on the detector inside the glass is 99.941%, which exactly matches the diffraction efficiency. The power on the detector outside the glass is 99.864%. I also tested the AR coating: it is perfect for different incident angles, so it cannot account for this roughly 0.1% power difference.